gromo.modules.growing_module.MergeGrowingModule

- class gromo.modules.growing_module.MergeGrowingModule(post_merge_function: Module = Identity(), previous_modules: list[MergeGrowingModule | GrowingModule] | None = None, next_modules: list[MergeGrowingModule | GrowingModule] | None = None, allow_growing: bool = False, tensor_s_shape: tuple[int, int] | None = None, device: device | None = None, name: str | None = None)

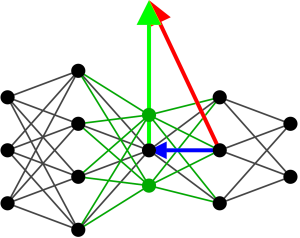

Module that connects multiple modules through a merge operation. The module does not perform the merge itself; the user is responsible for it.
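
As a rough illustration of a merge carried out by the caller, the sketch below sums the outputs of two parallel branches and then applies a post-merge function. It uses plain `torch.nn` layers rather than gromo classes; the element-wise sum, the layer sizes, and the names `branch_a`, `branch_b`, and `post_merge` are assumptions made for illustration only.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Two hypothetical parallel branches that feed the same merge point.
branch_a = nn.Linear(4, 3)
branch_b = nn.Linear(4, 3)

# Stand-in for the post_merge_function argument (assumed here to be a ReLU).
post_merge = nn.ReLU()

x = torch.randn(8, 4)

# The merge (assumed to be an element-wise sum) is written by the caller.
merged = branch_a(x) + branch_b(x)

# The merged tensor is then passed through the post-merge function.
out = post_merge(merged)
```

Both branches must produce tensors of the same shape for the sum to be valid, which is why the two layers share `out_features`.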

- add_next_module(module: MergeGrowingModule | GrowingModule) -> None

Add a module to the next modules of the current module.

- Parameters:

module (MergeGrowingModule | GrowingModule) – next module to add

- add_previous_module(module: MergeGrowingModule | GrowingModule) -> None

Add a module to the previous modules of the current module.

- Parameters:

module (MergeGrowingModule | GrowingModule) – previous module to add

- compute_optimal_delta(update: bool = True, return_deltas: bool = False, force_pseudo_inverse: bool = False, dtype: dtype = torch.float32) -> list[tuple[Tensor, Tensor]] | None

Compute the optimal delta for each previous layer from the current S and M tensors: dW* = M S[-1]^-1, using the pseudo-inverse when S[-1] is not invertible. Computes dW* (and dBias* if needed) and, when update is True, stores the result in the optimal_delta_layer attribute.

- Parameters:

update (bool, optional) – if True update the optimal delta layer attribute, by default True

return_deltas (bool, optional) – if True return the deltas, by default False

force_pseudo_inverse (bool, optional) – if True, use the pseudo-inverse to compute the optimal delta even if the matrix is invertible, by default False

dtype (torch.dtype) – dtype for S and M during the computation

- Returns:

optimal delta for the weights and the biases if needed

- Return type:

list[tuple[torch.Tensor, torch.Tensor]] | None
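
The formula dW* = M S^-1 with a pseudo-inverse fallback can be sketched in plain PyTorch as follows. This is not the gromo implementation: the helper name `optimal_delta` and the tensor shapes (`m` of shape `(out, in)`, `s` of shape `(in, in)`) are assumptions, and bias handling is left out.

```python
import torch


def optimal_delta(
    m: torch.Tensor, s: torch.Tensor, force_pseudo_inverse: bool = False
) -> torch.Tensor:
    """Sketch of dW* = M S^-1 with a pseudo-inverse fallback.

    Assumed shapes (not taken from gromo): m is (out, in), s is (in, in).
    """
    if not force_pseudo_inverse:
        try:
            return m @ torch.linalg.inv(s)
        except RuntimeError:
            # torch.linalg.inv raises a RuntimeError subclass when s is singular;
            # in that case fall through to the pseudo-inverse.
            pass
    return m @ torch.linalg.pinv(s)


s = torch.tensor([[2.0, 0.0], [0.0, 4.0]], dtype=torch.float64)
m = torch.tensor([[2.0, 4.0]], dtype=torch.float64)

delta = optimal_delta(m, s)
delta_pinv = optimal_delta(m, s, force_pseudo_inverse=True)
```

For an invertible `s`, both paths give the same result up to numerical precision, so forcing the pseudo-inverse mainly matters when `s` is ill-conditioned.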

- compute_previous_m_update() -> tuple[Tensor, int]

Compute the update of the tensor M for the input of all previous modules.

- Returns:

torch.Tensor – update of the tensor M

int – number of samples used to compute the update

- Raises:

NotImplementedError – abstract method

- compute_previous_s_update() -> tuple[Tensor, int]

Compute the update of the tensor S for the input of all previous modules.

- Returns:

torch.Tensor – update of the tensor S

int – number of samples used to compute the update

- Raises:

NotImplementedError – abstract method

- compute_s_update() -> tuple[Tensor, int]

Compute the update of the tensor S. Must be implemented for each concrete layer type.

- Returns:

torch.Tensor – update of the tensor S

int – number of samples used to compute the update

- Raises:

NotImplementedError – abstract method
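
The `(update, number of samples)` return pair suggests that updates are accumulated across batches and normalised by the total sample count. The sketch below illustrates that pattern under loud assumptions: `s_update` is a hypothetical helper, and S is assumed to be the sum of outer products of the input rows, which this page does not confirm.

```python
import torch


def s_update(x: torch.Tensor) -> tuple[torch.Tensor, int]:
    """Hypothetical update: sum of outer products of the rows of x, and the batch size."""
    return x.T @ x, x.shape[0]


batches = [torch.ones(2, 3), 2 * torch.ones(4, 3)]

total = torch.zeros(3, 3)
samples = 0
for batch in batches:
    update, n = s_update(batch)
    total += update  # accumulate the unnormalised statistic
    samples += n     # track how many samples contributed

# Normalise once at the end so that batches of different sizes are weighted correctly.
s_mean = total / samples
```

Returning the sample count alongside the tensor lets the caller combine batches of unequal size without biasing the estimate.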

- delete_update(include_previous: bool = False) -> None

Delete the update of the optimal added parameters.

- forward(x: Tensor) -> Tensor

Forward pass of the module. If needed, store the activity and pre-activity tensors.

- Parameters:

x (torch.Tensor) – input tensor

- Returns:

output tensor

- Return type:

torch.Tensor

- property input_volume: int

Expected input volume

- Returns:

input volume

- Return type:

int

- Raises:

NotImplementedError – abstract method

- property number_of_parameters: int

Get the number of parameters of the layer

- Returns:

number of parameters

- Return type:

int

- property number_of_predecessors: int

Get the number of preceding modules

- Returns:

number of previous modules

- Return type:

int

- property number_of_successors: int

Get the number of succeeding modules

- Returns:

number of next modules

- Return type:

int

- property output_volume: int

Expected output volume

- Returns:

output volume

- Return type:

int

- Raises:

NotImplementedError – abstract method

- parameters(recurse: bool = True) -> Iterator[Parameter]

Parameter iterator

- Parameters:

recurse (bool, optional) – use recursion, by default True

- Returns:

parameters iterator

- Return type:

Iterator[torch.nn.Parameter]
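
The `recurse` flag has the same meaning as in `torch.nn.Module.parameters`. The sketch below shows its effect on a plain `nn.Sequential` container, used here as a stand-in; what a `MergeGrowingModule` itself yields is not stated on this page.

```python
from torch import nn

# A container that owns no parameters directly; they live in its child layer.
model = nn.Sequential(nn.Linear(4, 3), nn.ReLU())

# recurse=False yields only parameters registered on the module itself.
direct = list(model.parameters(recurse=False))

# recurse=True (the default) also walks the submodules.
all_params = list(model.parameters())

# Total number of scalar parameters: 4 * 3 weights + 3 biases.
total = sum(p.numel() for p in all_params)
```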

- property pre_activity: Tensor

Get the pre-activity of the layer

- Returns:

pre-activity tensor

- Return type:

torch.Tensor

- projected_v_goal() -> Tensor

Compute the projected gradient of the goal with respect to the activity of the layer.

dLoss/dA_proj := dLoss/dA - dW B[-1] where A is the pre-activation vector of the layer, and dW the optimal delta for all the previous layers

- Returns:

projected gradient of the goal with respect to the activity of the next layer dLoss/dA - dW B[-1]

- Return type:

torch.Tensor
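
The projection dLoss/dA - dW B[-1] can be sketched for a batch of samples as below. The helper name `projected_gradient` and the batch-first shapes (`grad_a` of shape `(n, out)`, `b_prev` of shape `(n, in)`, `delta_w` of shape `(out, in)`) are assumptions for illustration, not the gromo implementation.

```python
import torch


def projected_gradient(
    grad_a: torch.Tensor, delta_w: torch.Tensor, b_prev: torch.Tensor
) -> torch.Tensor:
    """Subtract the part of the gradient already explained by the update delta_w.

    Assumed shapes: grad_a (n, out), delta_w (out, in), b_prev (n, in).
    """
    # With batch-first tensors, applying dW to each row of B is b_prev @ delta_w.T.
    return grad_a - b_prev @ delta_w.T


grad_a = torch.tensor([[1.0, 2.0]])
b_prev = torch.tensor([[1.0, 1.0, 1.0]])
delta_w = torch.tensor([[0.5, 0.0, 0.0], [0.0, 0.5, 0.5]])

projected = projected_gradient(grad_a, delta_w, b_prev)
```

With these values, `b_prev @ delta_w.T` equals `[[0.5, 1.0]]`, so the projected gradient is `[[0.5, 1.0]]`.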

- set_next_modules(next_modules: list[MergeGrowingModule | GrowingModule]) -> None

Set the next modules of the current module.

- Parameters:

next_modules (list[MergeGrowingModule | GrowingModule]) – list of next modules

- Raises:

NotImplementedError – abstract method

- set_previous_modules(previous_modules: list[MergeGrowingModule | GrowingModule]) -> None

Set the previous modules of the current module.

- Parameters:

previous_modules (list[MergeGrowingModule | GrowingModule]) – list of previous modules

- Raises:

NotImplementedError – abstract method

- sum_out_features() -> int

Count total out_features of next modules

- Returns:

sum of next out_features

- Return type:

int
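
The bookkeeping methods on this page (`add_previous_module`, `add_next_module`, `number_of_predecessors`, `number_of_successors`, `sum_out_features`) can be pictured with the pure-Python stand-in below. `ToyModule` and `ToyMerge` are hypothetical classes written for this sketch; they only mirror the method names and are not part of gromo.

```python
from dataclasses import dataclass, field


@dataclass
class ToyModule:
    """Hypothetical stand-in for a growing module; not part of gromo."""

    name: str
    in_features: int
    out_features: int


@dataclass
class ToyMerge:
    """Hypothetical stand-in that mirrors the bookkeeping methods of a merge module."""

    previous_modules: list = field(default_factory=list)
    next_modules: list = field(default_factory=list)

    def add_previous_module(self, module: ToyModule) -> None:
        self.previous_modules.append(module)

    def add_next_module(self, module: ToyModule) -> None:
        self.next_modules.append(module)

    @property
    def number_of_predecessors(self) -> int:
        return len(self.previous_modules)

    @property
    def number_of_successors(self) -> int:
        return len(self.next_modules)

    def sum_out_features(self) -> int:
        # Total out_features over all modules that consume the merged tensor.
        return sum(m.out_features for m in self.next_modules)


merge = ToyMerge()
merge.add_previous_module(ToyModule("a", in_features=4, out_features=3))
merge.add_previous_module(ToyModule("b", in_features=4, out_features=3))
merge.add_next_module(ToyModule("c", in_features=3, out_features=5))
merge.add_next_module(ToyModule("d", in_features=3, out_features=7))

total_out = merge.sum_out_features()
```

Here the two predecessors share `out_features=3`, matching the `in_features` of both successors, which is the shape constraint a merge point imposes.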